AI safety and research company Anthropic has unveiled a significant advance in post-training model control: a new technique that allows precise, “surgical” edits to the behavior of large language models. The method, rooted in a concept called “dictionary learning,” could substantially change how developers address flaws, biases, and vulnerabilities in powerful AI systems such as the company’s own Claude models.

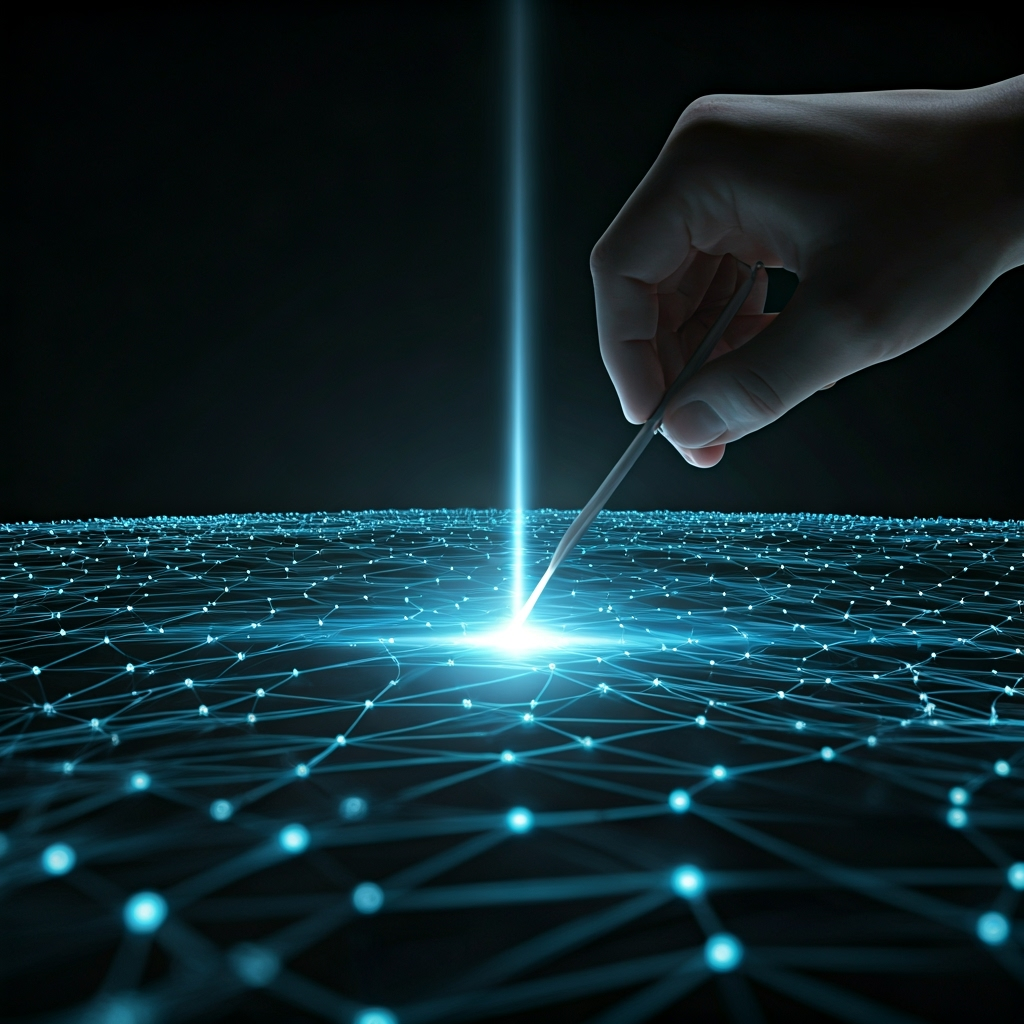

Traditionally, altering the fundamental behavior of a trained AI model (for example, removing a newly discovered security risk or a specific harmful bias) requires further training, often retraining the system on a revised dataset, which is costly and time-consuming. Anthropic’s new approach bypasses this entirely. Dictionary learning decomposes the model’s internal activations, the dense numerical patterns it produces while processing text, into a human-readable “dictionary” of features, each ideally corresponding to a single concept. Researchers can then pinpoint the features that govern certain behaviors and modify them directly. For example, they could identify a feature associated with susceptibility to “jailbreak” prompts and deactivate it without degrading the model’s performance on other tasks.
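To make the idea of a feature “dictionary” more concrete, here is a minimal toy sketch of dictionary learning with a sparse autoencoder, the general technique used in published interpretability work. It assumes a ReLU encoder and a linear decoder; the article does not specify Anthropic’s exact architecture, and every weight, dimension, and helper name below (`encode`, `decode`, `ablate_feature`) is hypothetical and hand-picked for illustration rather than learned from a real model. The sketch shows a 3-dimensional activation vector being expressed as 4 overcomplete features, and one feature being “deactivated” by zeroing it while the autoencoder’s reconstruction error is kept, so the rest of the activation is left alone.

```python
from typing import List

Vector = List[float]

# HYPOTHETICAL toy dictionary: 4 features in a 3-dimensional activation space.
# Real dictionaries are learned from a model's activations and are far larger.
W_ENC: List[Vector] = [
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
    [0.6, 0.8, 0.0],  # a fourth, overlapping direction (overcomplete dictionary)
]
B_ENC: Vector = [-0.5, -0.5, -0.5, -0.5]  # negative bias keeps weak features at zero
W_DEC: List[Vector] = [row[:] for row in W_ENC]  # tied decoder directions, for simplicity
B_DEC: Vector = [0.0, 0.0, 0.0]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def encode(x: Vector) -> Vector:
    """Sparse feature activations: f_i = ReLU(w_i . (x - b_dec) + b_i)."""
    centered = [xi - bi for xi, bi in zip(x, B_DEC)]
    return [max(0.0, dot(w, centered) + b) for w, b in zip(W_ENC, B_ENC)]


def decode(f: Vector) -> Vector:
    """Reconstruction: x_hat = b_dec + sum_i f_i * d_i."""
    x_hat = list(B_DEC)
    for fi, d in zip(f, W_DEC):
        for j, dj in enumerate(d):
            x_hat[j] += fi * dj
    return x_hat


def ablate_feature(x: Vector, k: int) -> Vector:
    """Zero feature k, adding back the reconstruction error so that the
    parts of x the dictionary does not explain are preserved."""
    f = encode(x)
    error = [xi - ri for xi, ri in zip(x, decode(f))]
    f_edit = list(f)
    f_edit[k] = 0.0
    return [ri + ei for ri, ei in zip(decode(f_edit), error)]


if __name__ == "__main__":
    x = [2.0, 0.2, 1.0]
    print("features:", encode(x))
    print("after ablating feature 0:", ablate_feature(x, 0))
```

Because the decoder is linear, keeping the error term means the edit amounts to subtracting exactly one feature’s contribution (its activation times its decoder direction) from the original activation, which is the sense in which such an intervention can be “surgical.” Whether this leaves other behavior intact in a real model is an empirical question this toy cannot answer.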

In a paper accompanying the announcement, Anthropic researchers demonstrated the removal of specific dangerous capabilities and undesirable biases from their models with surgical precision. This level of granular control is a major step forward for AI safety and alignment: it gives developers a way to patch issues quickly as they emerge, making AI systems more robust and trustworthy. As AI models are integrated into more critical applications, targeted interventions of this kind will be essential for managing their behavior and ensuring they operate as intended. The research points the industry beyond broad-strokes fine-tuning and toward a more sophisticated era of AI maintenance and control.