The competitive landscape of generative AI video has a powerful new contender. Chinese technology giant Kuaishou, a primary rival to TikTok-owner ByteDance, has officially unveiled Kling, a sophisticated text-to-video model that directly challenges offerings from industry leaders such as OpenAI’s Sora, Google’s Veo, and the tools from Pika Labs. The model has been released as a public beta for users in China.

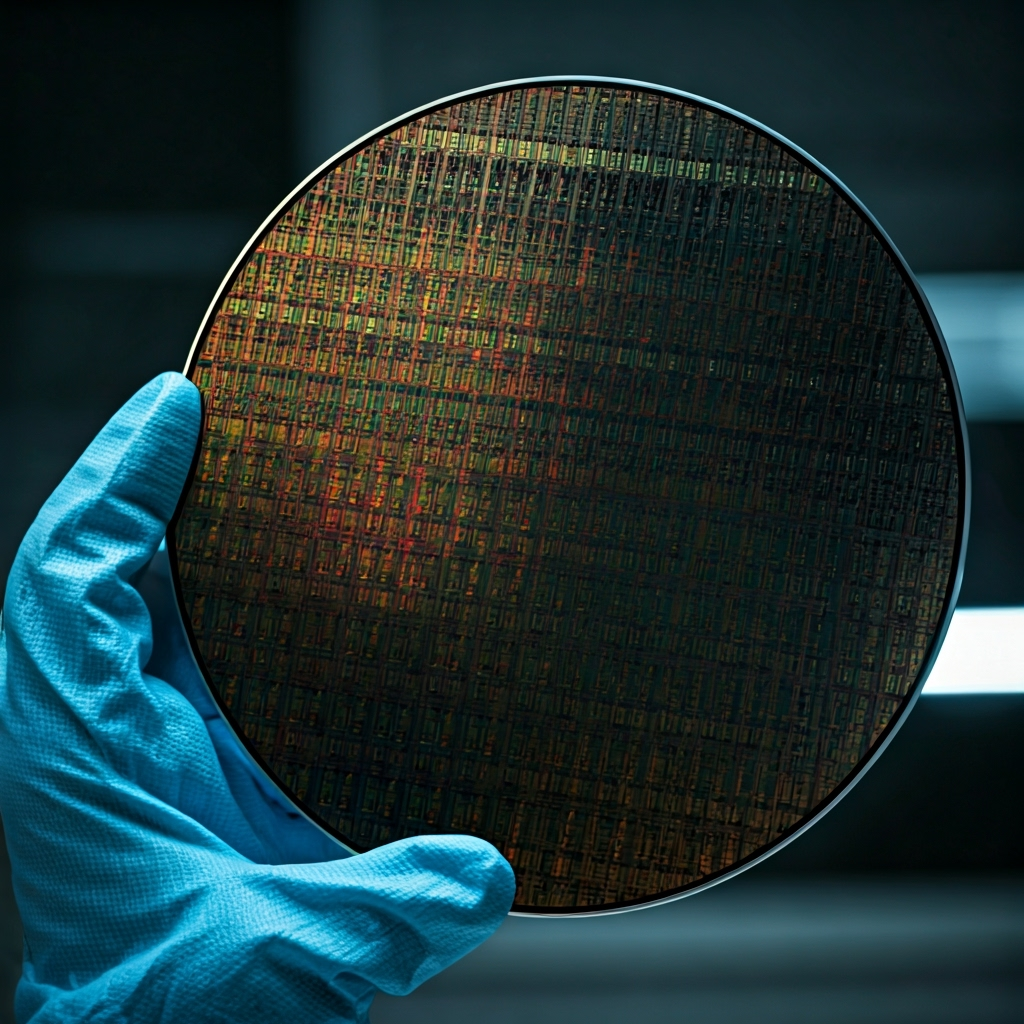

Kling’s capabilities mark a significant step forward in the field: the model can generate up to two minutes of video at 1080p resolution and 30 frames per second. That is double the one-minute maximum OpenAI has publicly demonstrated for Sora, which has not yet been released, and it exceeds what many existing tools offer. The model is built on a Diffusion Transformer architecture, similar to the one powering OpenAI’s model, which Kuaishou says enables it to produce complex, high-fidelity, and physically plausible scenes.

A key differentiator highlighted by Kuaishou is how Kling handles physics. According to the company, the model can simulate real-world motion and interactions, such as the way a car kicks up dust on a gravel road or how light reflects off different surfaces. Kuaishou has released several demonstration videos showcasing these abilities, including a child riding a bike through a flower garden and a man eating noodles. The clips have impressed AI researchers with their realism and their coherence over long durations.

The launch of Kling underscores the rapid acceleration of AI development within China’s tech ecosystem. While US-based companies like OpenAI and Google have often been seen as the frontrunners in generative AI, Kling’s emergence suggests that state-of-the-art innovation is happening globally. As the race to build the most capable and accessible AI video tools intensifies, Kling positions Kuaishou as a formidable player in the competition to define the future of digital content creation.